Chrome's 'Secure' indicator was designed to make users proceed on any HTTPS site

Research pushes users to continue rather than understand

July 12, 2021

Data-backed decision making has been described as 'part of Google's DNA'. It's defined as:

an approach that values decisions that can be backed up with verifiable data. The success of the data-driven approach is reliant upon the quality of the data gathered and the effectiveness of its analysis and interpretation.

People are expected to be able to build products informed by relevant research. This is particularly relevant for browsers, where complex topics of security - confidentiality, integrity, availability, authenticity, accountability, non-repudiation and reliability - need to be effectively communicated to users whose attention is typically elsewhere - i.e., on the content of the page.

There's been a lot of discussion on Twitter recently concerning browser security indicators, and the general sorry state of online security. Troy Hunt's article gives some excellent insight into the motivations and pressures within the industry right now.

I'd like to draw attention to one point in particular:

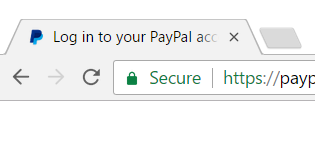

It sounds ridiculous, but you can verify this yourself in section 7.2 of Rethinking connection security indicators, A. P. Felt et al., 2016. This is the research behind the current 'Secure' marker that has appeared on all HTTPS sites since Chrome 53.

In section 7.2 three questions are asked:

- How safe would you feel about the current website?

- If you saw the below icon and message in the browser’s address bar, would that mean that someone might... (list of bad things)

- If you came across a site in your browser and saw this in the address bar, how would you most likely proceed?

Yes, the research was based on which indicators would encourage users to feel safe and proceed, rather than to understand what they're connecting to and judge accordingly.

This was used to reach the following conclusion, in section 7.3.1:

We find that “secure” and “https” are the most promising companions to a green lock icon.

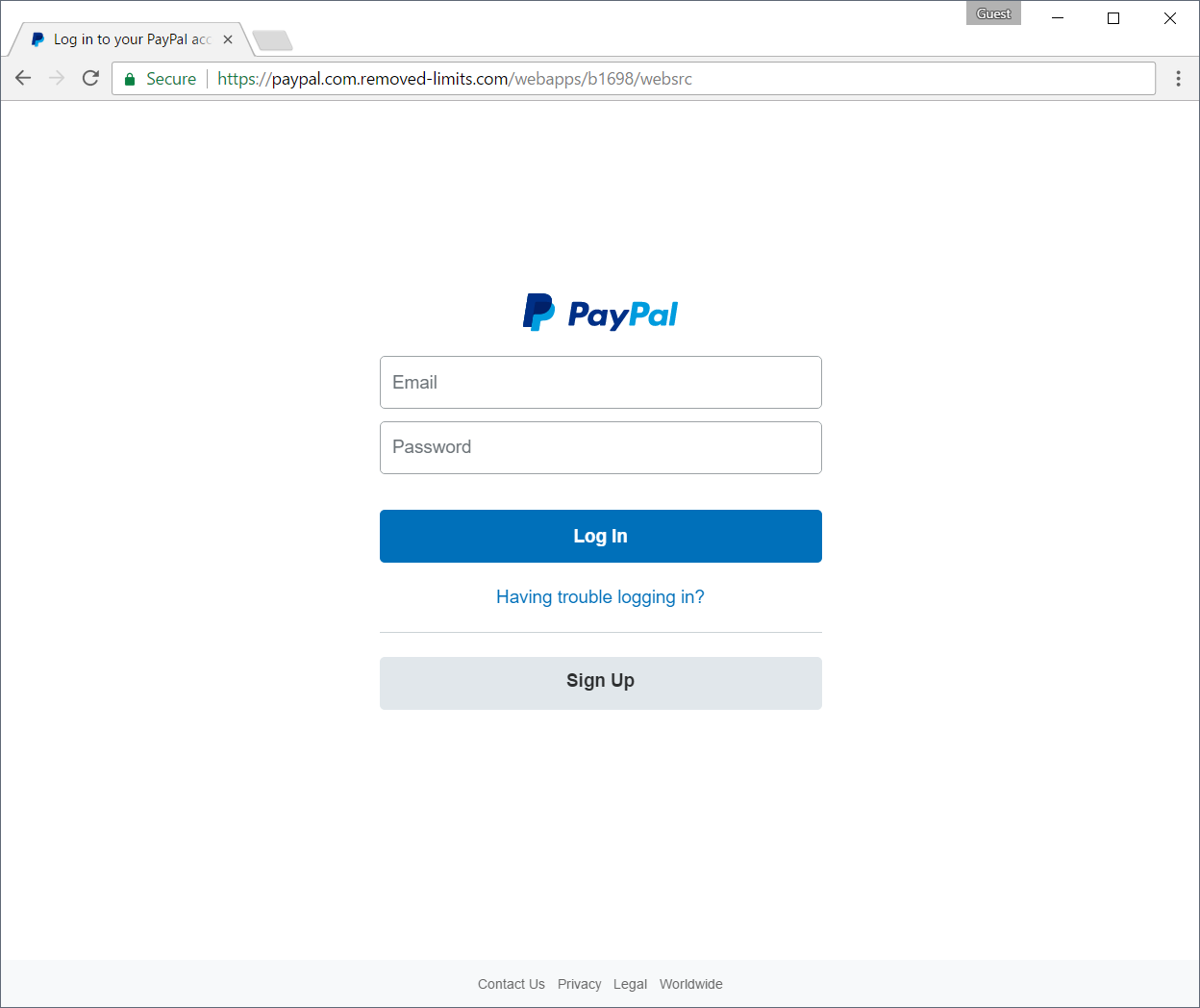

The results of encouraging users to feel safe and proceed on any encrypted site are obvious:

Chrome now explicitly tells users the site is Secure. Considering that authenticity is part of security, the 'Secure' label is factually incorrect: the site is not authentic.

We're not the only ones to notice that 'Secure' is dangerous:

Sure, 'Private' lost out, but the research never investigated which language communicated most accurately to users: instead, it aimed to make them feel secure and proceed. User comprehension was never measured. In fact, Google's research mentions 'we tested a set of strings to see which helped comprehension the most.' and then, a few paragraphs later, does something completely different: it tests which strings would encourage users to feel safe and proceed.

We obviously have some interest here: HTTPS authenticity is our job. So it's fair to point out another Chrome engineer was asked about this and replied:

This is an interesting response. So let's apply some data-driven decision making:

This is certainly measurable. I'd be surprised if most users expect this limitation. Perhaps a more productive question: would there be a better way to communicate 'secure from eavesdropping' to users?
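As a rough illustration of what 'measurable' could look like: show two groups of users different indicator wording, ask each group a comprehension question, and compare the share who answer correctly. The sketch below uses a standard two-proportion z-test. The function name, the label wording and every count in it are hypothetical placeholders for illustration, not results from Google's study or anyone else's.

```python
import math


def two_proportion_z(correct_a, n_a, correct_b, n_b):
    """Compare the share of correct comprehension answers between two groups.

    Returns the z statistic and a two-sided p-value under the normal
    approximation (reasonable only when both groups are fairly large).
    """
    p_a = correct_a / n_a
    p_b = correct_b / n_b
    # Pooled proportion under the null hypothesis that both labels
    # are understood equally well.
    pooled = (correct_a + correct_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) expressed via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # HYPOTHETICAL counts, invented purely to show the call shape:
    # group A saw one wording, group B saw another, and "correct" means
    # the participant correctly described what the indicator guarantees.
    z, p = two_proportion_z(correct_a=80, n_a=100, correct_b=50, n_b=100)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

The point is that the question being scored is 'did the user understand what the indicator means?' rather than 'did the user feel safe and proceed?'.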

Although the 'Secure' indicator should go away soon as positive indicators for encryption are phased out in favor of negative indicators for HTTP, it's still worrying that the largest browser vendor favored encouragement to proceed over safety.

Finally, while 'Rethinking connection security indicators' is flawed, at least Google are publishing user research on some of their browser security indicators. Other browser vendors have user research teams but we couldn't find anything published at all on the topic. CAs also have the resources available to contribute their own research - and browser patches implementing the suggested outcomes - and don't do so.

We need more research that genuinely focuses on helping user comprehension, from everyone.